Spec-Driven AI: Governed, Scalable Enterprise Intelligence

Artificial intelligence has moved from experimentation to expectation.

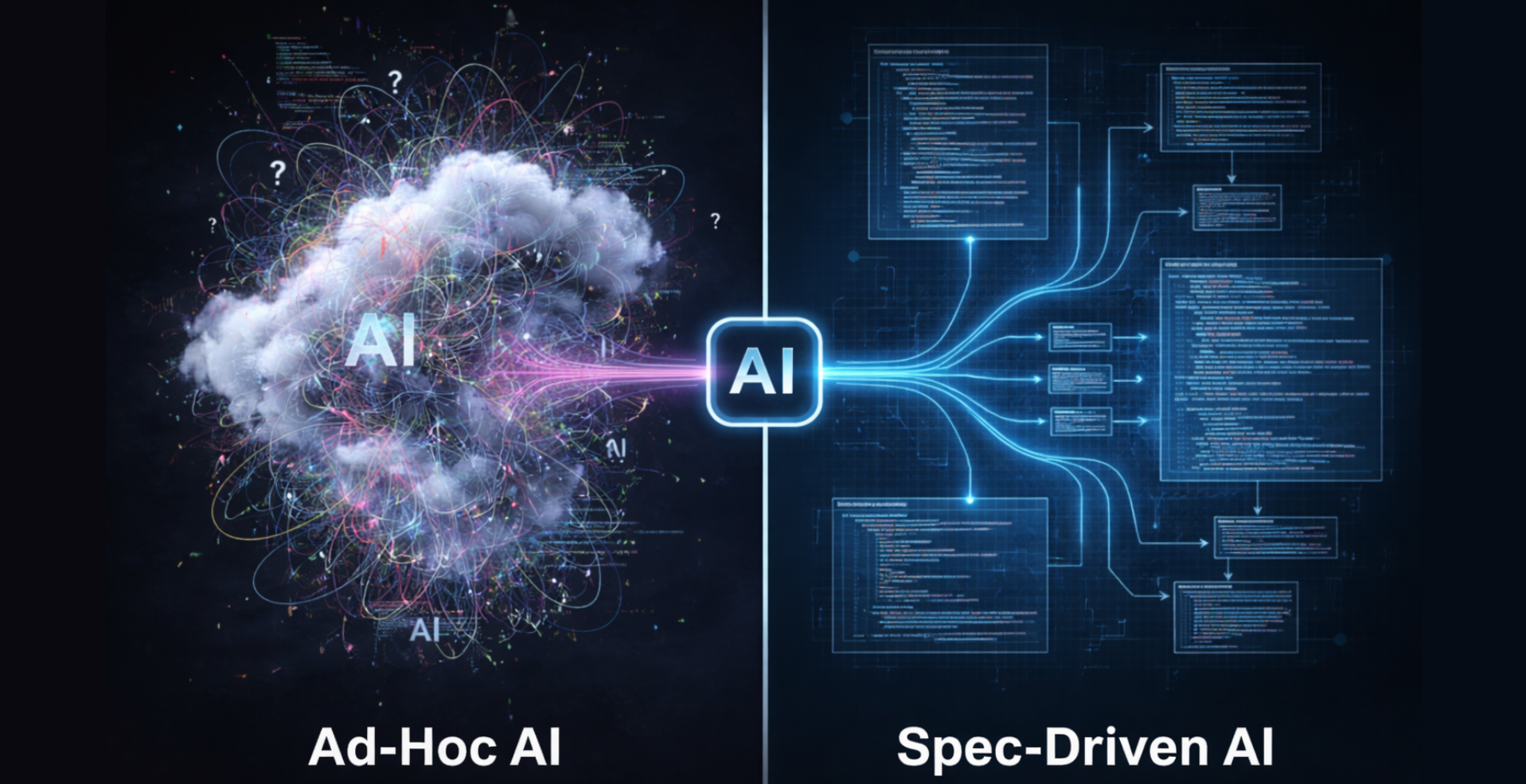

Over the past two years, enterprises rushed to deploy large language models, copilots, document intelligence systems, and early-stage agents. Many of those deployments delivered value. Many also revealed something uncomfortable: AI systems do not behave like traditional software. They are probabilistic, adaptive, and sensitive to context. When left loosely defined, they drift.

This realization marks a turning point.

The conversation inside boardrooms has shifted from “How do we use AI?” to “How do we control, govern, and scale AI safely?”

That shift is what is driving the rise of spec-driven AI development.

Spec-driven AI is not a trend built on buzzwords. It is an architectural discipline emerging from real production lessons. It reflects the maturation of AI from experimentation to infrastructure.

At ThirdEye Data, across our AI readiness programs, governed document intelligence deployments, and workflow automation systems, we have seen a consistent pattern. The difference between fragile AI and enterprise-grade AI is not the model. It is the specification.

The Inflection Point: From Prompting to Engineering

Early generative AI deployments were prompt-centric.

Teams focused on crafting instructions that produced acceptable responses. In controlled environments, this worked. But as systems scaled, weaknesses surfaced:

- Responses drifted in tone or reasoning.

- Edge cases produced inconsistent outcomes.

- Model upgrades altered behavior unexpectedly.

- Compliance teams lacked audit trails.

- Cost and token usage became unpredictable.

- Agents began acting outside intended workflow boundaries.

None of these failures stemmed from poor models. They stemmed from insufficient system definition.

Traditional software engineering matured decades ago around contracts. APIs have schemas. Services have SLAs. Security layers have policies. Changes are versioned. Tests enforce behavior.

AI systems must now adopt the same discipline.

Spec-driven AI development formalizes how AI systems are expected to behave before they are deployed.

It treats AI outputs as governed, testable artifacts rather than hopeful responses.

What “Spec-Driven” Actually Means

In conventional software, a specification defines what the system should do. In AI systems, specifications must define not only the function but the behavior under uncertainty.

A mature AI specification has multiple layers, each giving concrete answers to specific questions:

Functional Specification

What task must the AI perform?

Example: Extract structured insurance claim fields from unstructured documents.

Behavioral Specification

How should the AI reason, respond, and structure output?

Should it be conservative in uncertain cases? Should it abstain if confidence is low?

Safety and Compliance Specification

What must never occur?

What regulatory language must be enforced?

What escalation triggers are required?

Interface Specification

What output schema must be respected?

What format must downstream systems rely on?

Evaluation Specification

How will correctness be measured?

What test cases define acceptable vs unacceptable behavior?

Operational Specification

What latency is acceptable?

What token or cost budget applies?

What logging and traceability are required?

When these layers are defined explicitly, AI systems become governable components rather than opaque black boxes.
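One way to make these layers concrete is to capture them in a single versioned object. The sketch below is illustrative only: the class name `AISpec`, its field names, and every example value are assumptions made for this example, not a format this article prescribes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISpec:
    """Hypothetical container with one or more fields per specification layer."""
    version: str
    task: str                                # functional
    abstain_below_confidence: float          # behavioral
    forbidden_behaviors: tuple[str, ...]     # safety and compliance
    required_output_fields: tuple[str, ...]  # interface
    min_pass_rate: float                     # evaluation
    max_latency_ms: int                      # operational
    max_tokens_per_request: int              # operational


# Example values are invented for illustration.
claims_spec = AISpec(
    version="1.0.0",
    task="Extract structured insurance claim fields from unstructured documents",
    abstain_below_confidence=0.8,
    forbidden_behaviors=("infer_missing_financial_values",),
    required_output_fields=("claim_id", "claim_amount"),
    min_pass_rate=0.95,
    max_latency_ms=2000,
    max_tokens_per_request=4000,
)
```

Freezing the dataclass means a behavior change requires a new object with a new `version`, which is what makes the specification reviewable and diffable like any other versioned artifact.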

Why Enterprises Are Moving in This Direction

Spec-driven AI is emerging because enterprise conditions demand it.

- Regulatory Pressure: Emerging frameworks such as the EU AI Act and sector-specific governance mandates require traceability, explainability, and risk classification. Informal prompting cannot satisfy audit scrutiny.

- Financial Risk: Uncontrolled AI behavior introduces legal exposure, brand risk, and remediation costs. A single compliance failure can erase months of productivity gains.

- Model Volatility: Foundation models evolve rapidly. Updates can alter response tone, structure, or reasoning patterns. Without specification and regression testing, upgrades become risky.

- Agentic Systems: Autonomous or semi-autonomous agents compound unpredictability. When AI begins to initiate actions across workflows, behavioral constraints become essential.

These pressures are not theoretical. They are visible in production deployments across industries.

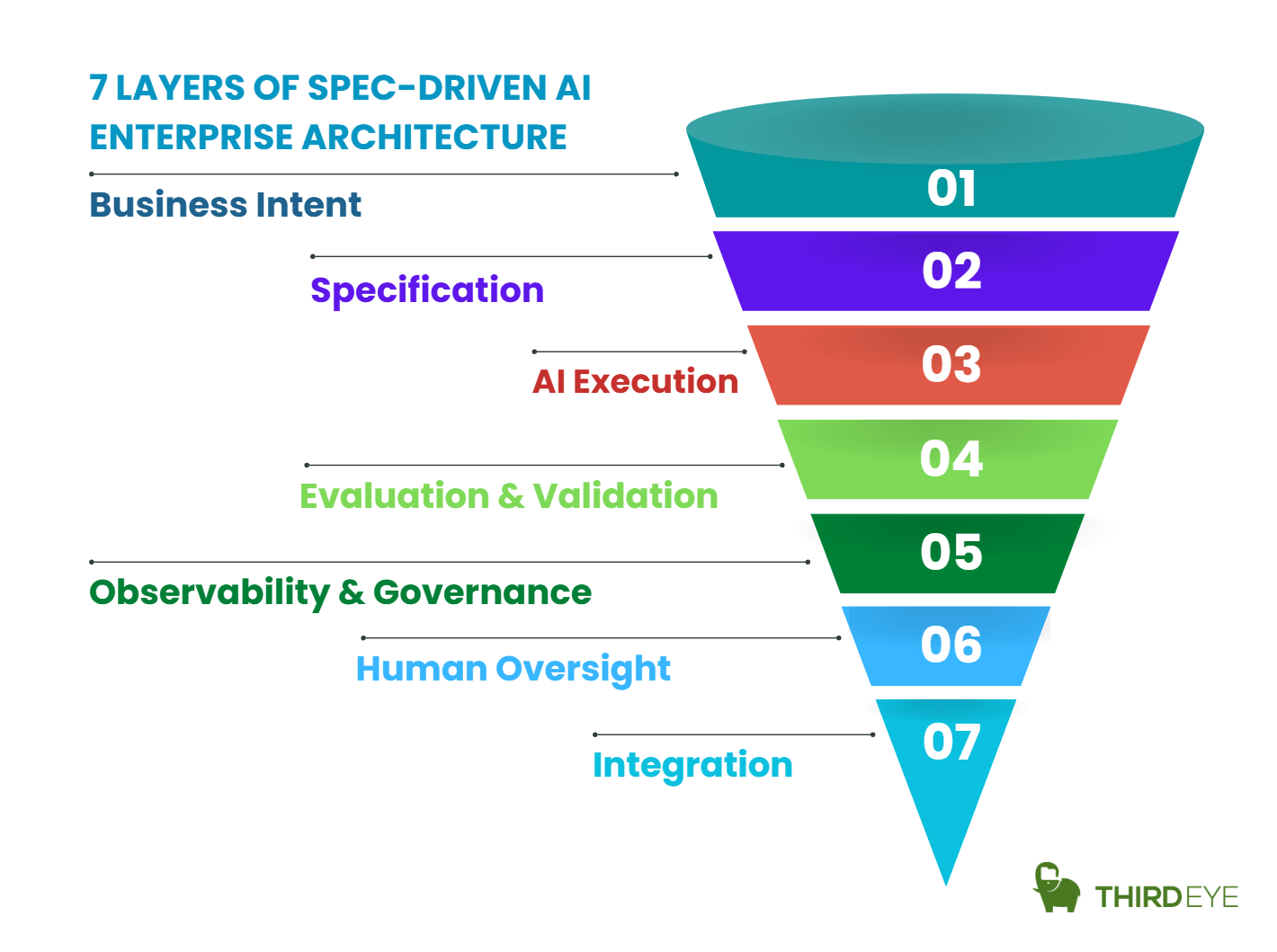

The Architecture of a Spec-Driven AI System

A spec-driven system is not a prompt wrapped in an API. It is an orchestrated architecture.

A typical enterprise pattern includes:

Specification Layer

- Behavioral contracts

- Schema definitions

- Guardrail rules

- Compliance constraints

Model Abstraction Layer

- Decoupling business logic from specific LLM vendors

- Allowing model replacement without behavioral drift

Retrieval and Context Layer

- Controlled data access

- Policy-bound document retrieval

- Source attribution requirements

Evaluation Harness

- Automated test cases

- Benchmark datasets

- Drift detection mechanisms

Observability and Logging Layer

- Prompt version tracking

- Output lineage

- Performance metrics

- Escalation flags

Human Oversight Layer

- Validation checkpoints

- Review workflows for high-risk decisions

In our document intelligence deployments, this layered approach allowed systems to maintain stable performance across model upgrades and regulatory reviews. The difference was not model capability. It was architectural discipline.

Evaluation as a First-Class Citizen

One of the defining characteristics of spec-driven AI is evaluation before deployment.

Evaluation moves beyond ad hoc testing. It becomes continuous.

Enterprises should define:

- Structured test datasets

- Edge case scenarios

- Negative test conditions

- Stress tests for ambiguous input

- Safety violation checks

- Tone and language consistency tests

In CI/CD pipelines, AI behavior must be regression-tested just like traditional code.

This principle has become foundational in our AI Readiness engagements. Organizations frequently underestimate how quickly AI behavior can shift without explicit evaluation pipelines.

Specification without evaluation is documentation. Specification with evaluation is engineering.
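A regression suite for AI behavior can look much like an ordinary test suite. The following is a minimal sketch under stated assumptions: `CASES`, `run_regression`, and `stub_model` are hypothetical names, and the stub stands in for a real model call so the example runs on its own.

```python
from typing import Callable

# Each case: (name, input text, check applied to the model output).
Case = tuple[str, str, Callable[[dict], bool]]

CASES: list[Case] = [
    ("typical", "Claim 123 for $500", lambda out: out["claim_amount"] == 500.0),
    # Negative test: the model must abstain rather than invent a value.
    ("missing_amount", "Claim 124, amount not stated", lambda out: out["claim_amount"] is None),
]


def run_regression(model: Callable[[str], dict], cases: list[Case]) -> list[str]:
    """Return the names of cases whose output violates the expected behavior."""
    failures = []
    for name, text, check in cases:
        try:
            ok = check(model(text))
        except Exception:
            ok = False  # a crash or malformed output counts as a failure
        if not ok:
            failures.append(name)
    return failures


def stub_model(text: str) -> dict:
    """Stand-in for a real model call: parses a dollar amount if one is present."""
    if "$" in text:
        return {"claim_amount": float(text.split("$")[1].split()[0])}
    return {"claim_amount": None}
```

In a pipeline, a non-empty list from `run_regression` would fail the build, so a prompt edit or model upgrade cannot ship while a specified behavior is broken.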

Governance and Spec-Driven AI Development

AI governance is often treated as a policy conversation. In reality, governance must be operationalized.

Spec-driven AI enables governance through:

- Versioned behavioral definitions

- Change impact analysis

- Risk classification mapping

- Audit trails

- Escalation triggers

Within enterprise AI Governance programs, we see a common maturity progression:

- Informal experimentation

- Documented prompts

- Centralized review

- Behavioral contracts

- Governed AI portfolio management

Treating these five stages as levels 1 through 5, the shift from level 2 (documented prompts) to level 4 (behavioral contracts) is where enterprises begin to reduce risk meaningfully.

Without specification, governance remains aspirational.

Cost and Financial Predictability

We have seen CIOs increasingly evaluate AI not as innovation spend but as operating expenditure.

Spec-driven systems improve financial discipline by:

- Defining token budgets

- Enforcing cost thresholds

- Monitoring usage anomalies

- Enabling controlled scaling

In one workflow automation deployment, introducing structured evaluation and budget controls significantly reduced the month-to-month variance in token expenditure without sacrificing performance.

Financial predictability is rarely discussed in AI marketing material. It becomes critical in enterprise operations.
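Token budgets and cost thresholds can be enforced in code rather than tracked after the fact. This is a small sketch, assuming a single monthly limit and an alert ratio; the class names `TokenBudget` and `BudgetExceeded` and the default of 0.8 are illustrative choices, not values from any deployment described here.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a request would push usage past the configured budget."""


class TokenBudget:
    def __init__(self, monthly_limit: int, alert_ratio: float = 0.8):
        self.monthly_limit = monthly_limit
        self.alert_ratio = alert_ratio
        self.used = 0

    def charge(self, tokens: int) -> bool:
        """Record usage; return True once the alert threshold has been reached."""
        if self.used + tokens > self.monthly_limit:
            # Reject the request before any usage is recorded.
            raise BudgetExceeded(f"{self.used + tokens} > {self.monthly_limit}")
        self.used += tokens
        return self.used >= self.alert_ratio * self.monthly_limit
```

The boolean return gives monitoring an early warning signal, while the exception is the hard stop that keeps spend within the threshold.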

Organizational Implications

Spec-driven AI changes team structure.

Enterprises begin to require:

- AI Product Owners responsible for behavioral definitions

- AI Architects defining system constraints

- Governance reviewers embedded early in design

- Evaluation engineers maintaining test harnesses

Prompt engineers alone cannot sustain enterprise systems.

AI becomes a product discipline.

Real-World Patterns from Production Deployments

In our experience, specification has proven essential across very different domains.

Document Intelligence

Unstructured document processing systems must extract structured data with high reliability. Without strict output schemas and fallback rules, integration with downstream ERP or claims systems fails.

Specification ensures:

- Deterministic field mapping

- Confidence scoring thresholds

- Human validation triggers

Safety Monitoring and Hazard Detection

Visual AI systems deployed in industrial environments require a conservative bias. False negatives may be unacceptable. Behavioral specs define escalation thresholds and override rules.

Workflow Automation and Agentic Systems

When AI coordinates multi-step processes, specifications define boundaries:

- What actions are permitted

- What decisions require human approval

- What audit logs must be generated

These systems become manageable only when autonomy is explicitly bounded.
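The three boundaries above (permitted actions, approval requirements, audit logs) can be expressed as a small policy check that every agent action passes through. The sketch below is hypothetical: `ActionPolicy`, `authorize`, and the action names in the usage note are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ActionPolicy:
    permitted: set[str]
    needs_approval: set[str]
    audit_log: list[dict] = field(default_factory=list)

    def authorize(self, agent: str, action: str) -> str:
        """Return 'allow', 'needs_approval', or 'deny', and log the decision."""
        if action in self.needs_approval:
            decision = "needs_approval"
        elif action in self.permitted:
            decision = "allow"
        else:
            decision = "deny"  # anything not listed is out of bounds
        self.audit_log.append({"agent": agent, "action": action, "decision": decision})
        return decision
```

For example, a policy built with `permitted={"read_invoice"}` and `needs_approval={"issue_refund"}` would allow the first action, hold the second for a human, and deny everything else. Denying by default is the design choice that keeps autonomy explicitly bounded.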

A Production Case: Spec-Driven Document Intelligence in a Regulated Environment

To illustrate how spec-driven AI moves from theory to enterprise-grade execution, consider a real-world pattern we frequently encounter: intelligent document processing in a regulated industry.

Business Context

An enterprise needed to automate extraction and validation of structured data from high-volume, semi-structured documents. These documents directly influenced downstream operational decisions and regulatory reporting.

The initial pilot worked well using prompt engineering. However, once scaled:

- Edge cases produced inconsistent field outputs

- Confidence thresholds were unclear

- Model upgrades altered extraction structure

- Compliance teams requested traceability of reasoning

- Integration systems required deterministic schemas

The challenge was not model accuracy. It was architectural rigor.

This is where spec-driven AI fundamentally changed the system design.

Step 1: Formalizing the Behavioral Specification

Instead of iterating prompts informally, the team defined an explicit AI contract consisting of:

Functional Contract

- Extract 32 predefined fields

- Normalize values into structured JSON

- Map document variations into standardized taxonomy

Behavioral Contract

- If extraction confidence < defined threshold → mark as “Needs Review”

- Never infer missing financial values

- Flag ambiguous entity matches rather than guessing

Compliance Contract

- Enforce regulatory terminology mappings

- Avoid free-form commentary in output

- Log justification snippets for sensitive fields

Interface Contract

- Strict JSON schema validation

- Field-level validation rules

- A defined null-handling protocol

This specification became version-controlled.

The AI system was no longer defined by a prompt. It was defined by a contract.
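Parts of such a contract can be checked mechanically on every output. The following is a simplified sketch, not the team's actual implementation: two hypothetical fields stand in for the 32, the threshold of 0.85 is an assumed value, and the shape of `output` (a `fields` mapping plus a `confidence` score) is invented for the example.

```python
REQUIRED_FIELDS = {"claim_id": str, "claim_amount": float}  # stand-in for the 32 fields
CONFIDENCE_THRESHOLD = 0.85  # assumed value; the article does not state one


def validate_output(output: dict) -> dict:
    """Check an extraction result against the interface and behavioral contract."""
    values = output.get("fields", {})
    extra = set(values) - set(REQUIRED_FIELDS)
    missing = set(REQUIRED_FIELDS) - set(values)
    if extra or missing:
        raise ValueError(f"schema violation: extra={sorted(extra)} missing={sorted(missing)}")
    for name, expected_type in REQUIRED_FIELDS.items():
        value = values[name]
        # Null-handling protocol (assumed): None means "not found", never a guess.
        if value is not None and not isinstance(value, expected_type):
            raise ValueError(f"{name} must be {expected_type.__name__} or null")
    # Behavioral contract: low confidence is routed to review, not rejected.
    low_confidence = output.get("confidence", 0.0) < CONFIDENCE_THRESHOLD
    status = "Needs Review" if low_confidence else "Accepted"
    return {"status": status, "fields": values}
```

Schema violations raise immediately because downstream systems cannot consume them, whereas low confidence is a valid outcome that the contract handles by flagging the record.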

Step 2: Introducing a Model Abstraction Layer

Rather than embedding vendor-specific prompt logic across services, a model abstraction layer was introduced.

This layer:

- Encapsulated prompt templates

- Handled structured output formatting

- Managed fallback behavior

- Allowed model replacement without rewriting business logic

When foundation models were upgraded, regression testing validated behavior against the specification before production release.

This prevented silent drift.
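A model abstraction layer of this kind can be sketched with a small interface that business logic depends on. Everything named here is an assumption for illustration: `ExtractionModel`, the two vendor stubs, `PROMPT_TEMPLATE`, and `extract` are hypothetical, and a real adapter would call a vendor SDK where the stubs return canned text.

```python
import json
from typing import Protocol


class ExtractionModel(Protocol):
    def complete(self, prompt: str) -> str: ...


class VendorAStub:
    """Placeholder for one vendor's client."""
    def complete(self, prompt: str) -> str:
        return '{"claim_id": "C-1"}'


class VendorBStub:
    """Placeholder for a second vendor with slightly different raw formatting."""
    def complete(self, prompt: str) -> str:
        return '{"claim_id":"C-1"}'


# The prompt template lives in the abstraction layer, not in calling services.
PROMPT_TEMPLATE = "Extract claim_id as JSON from:\n{document}"


def extract(model: ExtractionModel, document: str) -> dict:
    """Business logic depends only on the protocol, never on a vendor SDK."""
    raw = model.complete(PROMPT_TEMPLATE.format(document=document))
    try:
        return json.loads(raw)
    except json.JSONDecodeError:
        # Fallback behavior: never pass malformed output downstream.
        return {"claim_id": None}
```

Swapping `VendorAStub()` for `VendorBStub()` changes nothing for the caller of `extract`, which is the property that lets regression tests compare models against the same specification.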

Step 3: Building the Evaluation Harness

A dedicated evaluation harness was implemented with:

- A benchmark dataset covering common and edge-case documents

- Negative test scenarios (missing fields, ambiguous values)

- Schema validation tests

- Confidence threshold validation

- Drift detection comparing outputs across model versions

Evaluation was integrated into CI/CD.

Every specification update or model change triggered automated validation.

This transformed AI deployment from experimental release to controlled rollout.
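Drift detection across model versions can be as simple as comparing field-level outputs on the same benchmark set. This is a rough sketch, assuming each run produces one dictionary per benchmark document in the same order; `field_drift`, `release_allowed`, and the 2% tolerance are hypothetical.

```python
def field_drift(baseline: list[dict], candidate: list[dict]) -> dict[str, float]:
    """Per-field share of benchmark documents whose value changed between versions."""
    if len(baseline) != len(candidate):
        raise ValueError("both runs must cover the same benchmark documents")
    counts: dict[str, int] = {}
    for old, new in zip(baseline, candidate):
        # A field that appears or disappears also counts as a change.
        for name in old.keys() | new.keys():
            if old.get(name) != new.get(name):
                counts[name] = counts.get(name, 0) + 1
    return {name: n / len(baseline) for name, n in counts.items()}


def release_allowed(drift: dict[str, float], max_drift: float = 0.02) -> bool:
    """Gate a rollout: block it if any field drifts more than the tolerance."""
    return all(rate <= max_drift for rate in drift.values())
```

Reporting drift per field, rather than as one aggregate score, shows reviewers which part of the contract a model change affected.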

Step 4: Governance and Observability Integration

To satisfy compliance and audit requirements:

- Every output stored the version ID of its behavioral specification

- Model version metadata was logged

- Confidence scores were recorded

- Escalations were tracked

- Human overrides were documented

During regulatory reviews, the enterprise could demonstrate:

- Why a value was extracted

- Under which behavioral version

- With what confidence threshold

- And whether human intervention occurred

That level of traceability is impossible without specification discipline.
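The traceability described above comes down to writing a structured record alongside each output. The sketch below shows one possible shape; `AuditRecord`, `make_record`, and all field names are assumptions, not the schema used in the deployment.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditRecord:
    document_id: str
    field_name: str
    value: Optional[str]
    justification: str   # source snippet supporting the extracted value
    spec_version: str    # behavioral specification in force
    model_version: str
    confidence: float
    escalated: bool
    human_override: bool
    recorded_at: str


def make_record(**kwargs) -> str:
    """Serialize one extraction decision as a JSON line for an append-only log."""
    record = AuditRecord(recorded_at=datetime.now(timezone.utc).isoformat(), **kwargs)
    return json.dumps(asdict(record), sort_keys=True)
```

Each line answers the four audit questions directly: why the value was extracted (`justification`), under which behavioral version (`spec_version`), with what confidence, and whether a human intervened.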

Step 5: Human-in-the-Loop Boundaries

Instead of full automation, bounded autonomy was implemented:

- High-confidence outputs auto-processed

- Medium-confidence routed to validation queue

- Low-confidence blocked and escalated

This preserved efficiency while managing risk exposure.
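The three-tier routing can be written as a single function. The thresholds of 0.90 and 0.60 below are placeholder assumptions, as are the function name `route` and the returned labels; in practice the thresholds would come from the versioned specification.

```python
def route(confidence: float, high: float = 0.90, low: float = 0.60) -> str:
    """Map a confidence score to one of the three handling paths."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= high:
        return "auto_process"
    if confidence >= low:
        return "validation_queue"
    return "block_and_escalate"
```

Keeping the thresholds as parameters makes the risk trade-off explicit and testable: tightening `high` sends more documents to human validation without touching any other code.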

Document-handling automation became controlled rather than reckless.

Results of the Spec-Driven Approach

The impact was measurable:

- Reduction in downstream data reconciliation errors

- Stable behavior across model upgrades

- Reduced compliance escalations

- Predictable integration with ERP and reporting systems

- Controlled scaling without architectural rework

Most importantly, AI transitioned from pilot success to operational infrastructure.

The key enabler was not a better model.

It was a better specification.

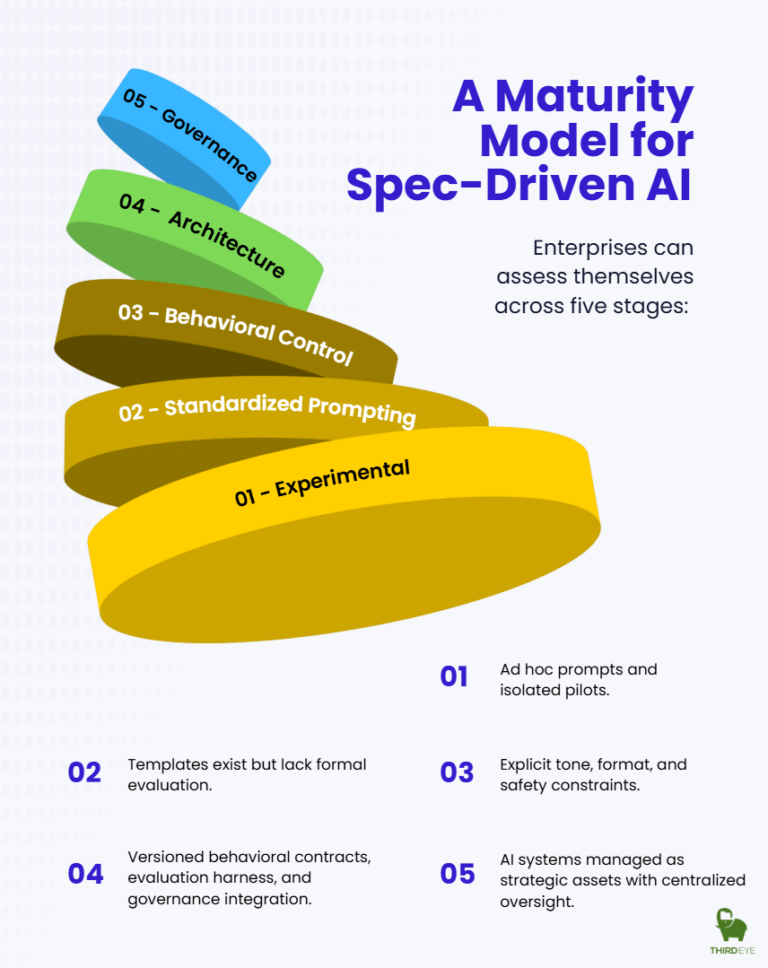

A Maturity Model for Spec-Driven AI

Spec-driven AI does not emerge overnight. It reflects a gradual architectural evolution in how organizations design, control, and scale AI systems.

- At Level 1 (Experimental), AI is exploratory. Teams run isolated pilots, prompts live in notebooks or chat histories, and success is measured by novelty rather than repeatability. There is little coordination and virtually no structural governance. AI is interesting but not yet operational.

- At Level 2 (Standardized Prompting), some discipline appears. Organizations introduce shared templates, internal prompt libraries, and light usage guidelines. However, behavior still lives inside prompts rather than in formal specifications. Evaluation remains subjective, and governance is advisory rather than embedded. Many enterprises remain at this stage: structured, but not architected.

- At Level 3 (Behavioral Control), constraints become explicit. Tone, format, and safety guardrails are defined more rigorously. Testing workflows emerge, and monitoring becomes intentional. Yet the system still depends heavily on prompt engineering. Behavioral intent is not fully decoupled from implementation, which limits portability and long-term resilience.

- The structural shift occurs at Level 4 (Spec-Driven Architecture). Here, AI behavior is defined through versioned specifications, not just prompts. A formal specification layer sits between business intent and model execution. Evaluation harnesses are automated, governance is embedded into architecture, and traceability becomes native. AI systems at this level are testable, auditable, and modular. They can evolve without losing behavioral integrity.

- At Level 5 (Governed AI Portfolio), AI is no longer treated as a collection of use cases. It is managed as a strategic enterprise asset. Specifications are centrally governed, risk and compliance are integrated at the portfolio level, and AI initiatives align directly with enterprise architecture and long-term strategy. At this stage, AI is infrastructure, not experimentation.

The movement from Level 2 to Level 4 is transformative because it represents a shift from operational discipline to architectural discipline. It is the difference between organizing prompts and engineering systems. One improves consistency. The other creates durability.

Why This Matters for the Future of Agentic AI

The next wave of enterprise AI will involve multi-agent orchestration and semi-autonomous decision systems.

Without specification:

- Agents may act outside intent.

- Workflow chains may compound errors.

- Accountability may blur.

Spec-driven foundations make agentic systems viable. They establish boundaries before autonomy expands.

The Strategic Implication for Enterprises

Spec-driven AI reframes artificial intelligence from experimentation to infrastructure.

It aligns AI development with:

- Software engineering rigor

- Regulatory compliance

- Financial governance

- Operational reliability

For CIOs and CTOs, this shift is not optional. It defines whether AI remains a controlled asset or becomes an unmanaged liability.

Enterprises that invest in specification discipline now will scale faster later.

Those that do not will spend more time correcting drift than creating value.

Final Reflection

AI capability is no longer the bottleneck.

Architectural maturity is.

Spec-driven AI development represents the next stage of enterprise intelligence engineering. It transforms AI from a probabilistic experiment into a governed, testable, scalable system.

This is not about restricting AI. It is about making AI reliable enough to trust.

And in enterprise environments, trust is the foundation of scale.
