The Hidden Cost of AI: Why Model Inference is the Real Battleground

The spotlight in AI is always on the breakthrough: the massive model trained, the world record broken. But for businesses, the real challenge begins after the applause dies.

Once your AI model is trained, it becomes a product. And like any product, it has to perform, scale, and, most importantly, be affordable. The moment that trained models start making real-world predictions—what we call inference—is where AI systems often hit a wall of latency, complexity, and staggering cost.

At ThirdEye Data, we’ve seen countless production systems fail not because the model was bad, but because the economics of running it simply didn’t work. The answer to this scaling nightmare? Amazon EC2 Inf1 Instances.

Inf1: Shifting the Economics of Production AI

Forget the traditional graphics cards (GPUs) that are great for training but become wildly expensive when simply serving predictions 24/7. Inf1 instances, powered by the custom-designed AWS Inferentia chip, are laser-focused on one thing: inference at scale, with maximum efficiency.

According to AWS, measured against comparable GPU-based EC2 instances, Inf1 delivers:

- Up to 70% Lower Cost per Inference: This isn’t just a saving; it’s a difference that allows you to deploy high-volume AI where it was previously cost-prohibitive. Think about serving millions of daily chatbot queries or processing video feeds around the clock: now you can afford it.

- Up to 2.3x Higher Throughput: More requests, handled faster. Millisecond latency is not a luxury; it’s a requirement for real-time applications like instant recommendations or voice commands.

In essence, Inf1 instances are the workhorses of the AI factory floor. They take cutting-edge research and transform it into practical, scalable business value.

Under the Hood: A Chip Built for Speed

Inf1 isn’t running on repurposed hardware; it’s an architecture meticulously crafted for deep learning inference.

Imagine the AWS Inferentia chip as a specialized engine. Each chip contains four NeuronCores, which are like high-speed assembly lines, optimized specifically for the matrix and tensor math that forms the backbone of deep learning. By using the AWS Neuron SDK, we can take models from PyTorch, TensorFlow, or MXNet and “bake” them for the chip, compiling them ahead of time so they run at peak performance.
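As a rough illustration of that compile step, the sketch below traces a PyTorch model with the `torch-neuron` package from the AWS Neuron SDK. It assumes an environment where `torch` and `torch-neuron` are installed (for example, an Inf1 instance or a Neuron-enabled build machine). The function name `compile_for_inf1` and the default output path are hypothetical placeholders, not part of the SDK.

```python
def compile_for_inf1(model, example_input, output_path="model_neuron.pt"):
    """Compile a PyTorch model for Inferentia and save the result.

    `model` is any traceable torch.nn.Module and `example_input` is a
    tensor with the shape the model will see in production.
    """
    # Imported lazily so this file can be read and linted on machines
    # that do not have the Neuron packages installed.
    import torch
    import torch_neuron  # noqa: F401  (registers the torch.neuron namespace)

    model.eval()  # inference mode: disables dropout, freezes batch-norm stats

    # Ahead-of-time compilation for Inferentia; returns a TorchScript module.
    model_neuron = torch.neuron.trace(model, example_inputs=[example_input])

    # The saved artifact is what gets shipped to the Inf1 serving fleet.
    model_neuron.save(output_path)
    return output_path
```

Because the compiler specializes the model to the example input's shape, the example input should match production traffic (batch size, sequence length, image resolution).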

The result is a streamlined pipeline: less data shuffling, less energy wasted, and near-instant responsiveness—a true requirement for modern applications like:

- Real-time Conversational AI: Minimal lag for customer support bots and virtual assistants.

- Dynamic Recommendation Engines: Delivering the right product suggestion instantly.

- Video Analytics: Processing thousands of video frames per second for security or quality control.

A Tale of Two Chips: Trainium vs. Inferentia

Understanding Inf1 means understanding the full AI lifecycle built by AWS:

| AI Lifecycle Stage | AWS Instance Type | Purpose | The Human Analogy |
| --- | --- | --- | --- |
| Model Training | Trn1 (Trainium) | Building and refining the model. | The Research & Development team. |
| Model Inference | Inf1 (Inferentia) | Deploying the model to serve predictions. | The Mass Production line. |

At ThirdEye Data, we leverage this synergy to offer a truly end-to-end ML pipeline. We can train your large language model (LLM) efficiently on Trn1 and then deploy the production version on Inf1 at a fraction of the cost and latency.

This coordinated approach eliminates the friction and cost that usually plague the journey from lab to production.

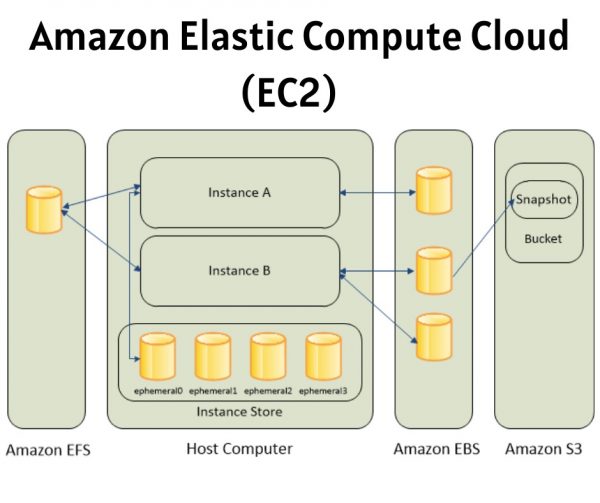

Amazon EC2 Cloud

Image courtesy: Amazon

Where the Dollars and Sense Meet: Use Cases Where Inf1 Shines

If Trainium (Trn1) is the budget-friendly engine for training, Inferentia (Inf1) is the definitive solution for reducing the single largest operational expense in AI: serving predictions at scale. This is where inference cost drops dramatically, transforming AI from a massive capital drain into a cost-efficient business utility.

The Enterprise Inference Revolution

- Conversational AI & Chatbots: The moment of truth for customer service bots and voice assistants is instant response. Inf1 provides the ultra-low latency needed to deploy complex, fine-tuned LLMs and intent-recognition models in real-time applications, making conversations feel seamless and human.

- Hyper-Personalized Recommendation Systems: To drive sales, recommendations must be dynamic and instantaneous. Inf1 runs the complex inference logic for modern e-commerce and streaming platforms, ensuring users get relevant, real-time product or content suggestions with high throughput.

- Real-Time Computer Vision: From detecting defects on a high-speed manufacturing line to analyzing retail foot traffic or managing smart surveillance, Inf1 enables real-time video analytics. It processes large volumes of images and video streams with the speed and efficiency required for instant operational decisions.

- Speech & Audio Recognition: For modern enterprise automation, fast and accurate transcription and voice command recognition are essential. Inf1 provides the processing power to return transcripts and recognized commands quickly, powering virtual agents and transcription services reliably.

- Generative AI Inference: Serving large diffusion models (for images) or transformer models (for text) is resource intensive. Inf1 optimizes the deployment of these Generative AI systems, allowing enterprises to offer custom content creation with industry-leading cost-performance.

Strategic Comparison: The Inference Cost-Efficiency Contest

When choosing deployment hardware, the math is simple: lower cost per prediction wins. Inferentia’s custom design gives it an unbeatable advantage in this critical area.

| Feature | Inf1 (Inferentia) | G4dn (NVIDIA T4) | G5 (NVIDIA A10G) |
| --- | --- | --- | --- |
| Primary Purpose | Inference Excellence | Inference | Inference/Training |
| Cost Efficiency | ★★★★★ (The best) | ★★★☆☆ (Moderate) | ★★★★☆ (Good) |
| Latency | Ultra-low | Moderate | Low |
| Energy Efficiency | Highest | Medium | Medium |

The Takeaway: While NVIDIA GPUs (G4dn/G5) offer a familiar, mature ecosystem, Inferentia’s native optimization provides unmatched cost-performance for large-scale inference workloads. For enterprises running demanding AI systems in production 24/7, the cost savings of Inf1 directly impact the bottom line.
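The cost-per-prediction comparison can be worked through with simple arithmetic. The sketch below is illustrative only: the hourly prices and throughput figures are made-up placeholders, not AWS list prices or benchmark results, and should be replaced with your own measured numbers.

```python
def cost_per_million(hourly_price_usd: float, throughput_per_sec: float) -> float:
    """Serving cost in USD per one million inferences at full utilization."""
    if hourly_price_usd < 0 or throughput_per_sec <= 0:
        raise ValueError("price must be >= 0 and throughput must be > 0")
    inferences_per_hour = throughput_per_sec * 3600
    return hourly_price_usd / inferences_per_hour * 1_000_000


def savings_fraction(baseline_cost: float, candidate_cost: float) -> float:
    """Fraction of the baseline cost saved by switching to the candidate."""
    return 1 - candidate_cost / baseline_cost


# Hypothetical inputs for illustration; substitute real prices and benchmarks.
gpu_cost = cost_per_million(hourly_price_usd=0.50, throughput_per_sec=1000)
inf1_cost = cost_per_million(hourly_price_usd=0.25, throughput_per_sec=1500)
saving = savings_fraction(gpu_cost, inf1_cost)

print(f"GPU:    ${gpu_cost:.4f} per million inferences")
print(f"Inf1:   ${inf1_cost:.4f} per million inferences")
print(f"Saving: {saving:.0%}")
```

Note that the calculation assumes the instance is fully utilized. At lower utilization the cost per inference rises for every instance type, so measured throughput under realistic traffic matters more than peak numbers.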

Challenges: Overcoming Deployment Hurdles

Moving a model from a GPU-based training environment to a custom inference chip requires a calculated strategy. We prepare our clients for these minor, navigable hurdles:

- Framework Adaptation: Models built on familiar GPU stacks may require conversion via standard tools like ONNX or minor adjustments to align with the Neuron compatibility layer.

- The Neuron Learning Curve: As with Trainium, teams need a dedicated understanding of the Neuron SDK to unlock the chip’s full efficiency. This is a crucial, but manageable, MLOps skill gap.

- Limited GPU-Specific Features: CUDA-optimized functions, while powerful, will not directly translate to Inferentia. Deployment requires a commitment to using hardware-agnostic functions or specific Neuron tools.
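Whichever migration path is taken, one common safeguard is to compare the migrated model’s outputs against the original model’s outputs on the same inputs. The following is a minimal sketch, assuming each model’s output has been flattened to a plain list of floats. The function names and the default tolerance are illustrative assumptions, not part of the Neuron SDK.

```python
from typing import Sequence


def max_abs_diff(reference: Sequence[float], candidate: Sequence[float]) -> float:
    """Largest element-wise absolute difference between two output vectors."""
    if len(reference) != len(candidate):
        raise ValueError("outputs must have the same length")
    return max((abs(r - c) for r, c in zip(reference, candidate)), default=0.0)


def outputs_match(
    reference: Sequence[float],
    candidate: Sequence[float],
    tolerance: float = 1e-2,
) -> bool:
    """True if the migrated model stays within `tolerance` of the original."""
    return max_abs_diff(reference, candidate) <= tolerance


# Made-up class probabilities from the original model and a migrated model.
original = [0.10, 0.70, 0.20]
migrated_ok = [0.101, 0.698, 0.201]
migrated_bad = [0.30, 0.50, 0.20]

print(outputs_match(original, migrated_ok))
print(outputs_match(original, migrated_bad))
```

Small numeric drift is expected when a model is compiled for different hardware, so the tolerance should be chosen per model and validated against task-level accuracy rather than treated as a fixed constant.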

ThirdEye Data’s Edge:

We transform these challenges into a streamlined process. Our MLOps teams utilize custom model wrappers and automated Neuron pipelines, allowing for seamless and rapid model migration from GPU to Inf1. We ensure your model moves effortlessly to production while maintaining performance parity and drastically cutting long-term serving costs.

Frequently Asked Questions:

- What are Amazon EC2 Inf1 instances?

Amazon EC2 Inf1 instances are AWS compute instances powered by AWS Inferentia chips, custom-built for machine learning inference (the prediction stage). They deliver high throughput and low latency at up to 70% lower cost compared to GPU-based instances.

- How are Inf1 instances different from Trn1 or GPU instances?

- Inf1 = Inference (prediction)

- Trn1 = Training (model building/tuning)

- GPU = General-purpose acceleration (training or inference, but more expensive)

Inf1 is optimized specifically for production-scale inference, making it faster and more cost-effective than GPUs.

- What types of AI models can run on Inf1?

Inf1 supports a wide range of deep learning models including:

- NLP (BERT, GPT-style models, translation)

- Computer Vision (ResNet, YOLO, object detection)

- Recommendation systems

- Speech recognition

- Generative AI inference models

- Do I need to change my code to use Inf1?

Minimal changes are required. You use the AWS Neuron SDK to compile your existing PyTorch, TensorFlow, or ONNX models to run on Inferentia. Most models can be migrated with just a few lines of modification.
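On the serving side, the change for a PyTorch model can be as small as one extra import. The sketch below assumes a model that has already been compiled with the Neuron SDK and saved as a TorchScript file, and an Inf1 host with `torch` and `torch-neuron` installed. The helper names `load_neuron_model` and `predict` are hypothetical.

```python
def load_neuron_model(path):
    """Load a Neuron-compiled TorchScript model on an Inf1 host."""
    # Imported lazily so the module can be inspected without Neuron installed.
    import torch
    import torch_neuron  # noqa: F401  (needed so the compiled ops can be loaded)

    return torch.jit.load(path)


def predict(model, example_input):
    """Run a single inference call without tracking gradients."""
    import torch

    with torch.no_grad():
        return model(example_input)
```

Apart from the `torch_neuron` import, this is the same `torch.jit.load` pattern used for any TorchScript model, which is why existing serving code usually needs little modification.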

- What frameworks does Inf1 support?

- PyTorch

- TensorFlow

- Hugging Face Transformers

- ONNX Runtime

All are supported via the AWS Neuron SDK.

- How much performance improvement can I expect?

Depending on the model, you can achieve:

- Up to 2.3x higher throughput

- Up to 50% lower latency

- Up to 70% lower cost per inference

These figures are relative to GPU instances such as G4 or G5.

- Can Inf1 scale for large workloads?

Yes! Inf1 instances support:

- Multiple Inferentia chips per instance (up to 16)

- High network bandwidth (up to 100 Gbps)

- Integration with ECS, EKS, and SageMaker for auto-scaling

Inf1 is built for REAL production environments, not just small tests.
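For a first-pass sizing estimate, the number of instances can be derived from a target request rate and a measured per-instance throughput. This is a minimal sketch: the `headroom` parameter and the example numbers are assumptions for illustration, not AWS guidance or benchmark data.

```python
import math


def instances_needed(
    target_rps: float,
    per_instance_rps: float,
    headroom: float = 0.7,
) -> int:
    """Estimate fleet size for a target request rate.

    `headroom` is the fraction of measured peak throughput you are willing
    to plan on, leaving the remainder for traffic spikes and failover.
    """
    if target_rps < 0 or per_instance_rps <= 0 or not 0 < headroom <= 1:
        raise ValueError("invalid capacity-planning inputs")
    usable_rps = per_instance_rps * headroom
    return max(1, math.ceil(target_rps / usable_rps))


# Hypothetical numbers: 5,000 requests/s target, 1,200 requests/s measured
# per instance, planning on 70% of that measured peak.
fleet_size = instances_needed(target_rps=5000, per_instance_rps=1200)
print(f"Instances needed: {fleet_size}")
```

The result is a starting point for an auto-scaling group’s minimum or desired capacity; actual scaling policies should still be driven by observed latency and utilization.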

- Is it hard to optimize models for Inf1?

Not really. AWS provides tools like:

- Neuron Compiler (to optimize models)

- Neuron Runtime (to run them efficiently)

- Neuron Profiler (to tune performance)

Once your first model is set up, future deployments become much faster.

- Can Inf1 be used with SageMaker?

Absolutely. SageMaker has built-in support for Inf1 instances.

You can deploy endpoints using Inf1 directly, or compile models using SageMaker Neo for even better performance.

- Why should my business consider Inf1?

Choose Inf1 if you need:

- Scalable real-time AI

- Lower inference costs

- Better performance than GPUs

- Enterprise-grade reliability

- Seamless integration with the AWS AI pipeline

At ThirdEye Data, we recommend Inf1 for clients who want to run AI in production at scale without breaking the bank.

Moving Beyond the Hype

While Inf1 offers unmatched cost-efficiency for large-scale inference, it’s not a drop-in replacement for every scenario. It requires expertise in the Neuron SDK to correctly convert and optimize models.

This is where ThirdEye Data steps in. We’ve already done the heavy lifting, building the custom model wrappers and automated Neuron pipelines necessary to ensure a smooth, high-performance migration. We bridge the gap between your existing model and the new, cost-optimized Inferentia architecture.

Inf1 is more than just another instance type. It’s the platform that makes real-time, scaled AI a sustainable business reality. By mastering the full Trn1 + Inf1 stack, we ensure your AI isn’t just smart: it’s affordable, fast, and ready for the future.