Supercharge AI Training Speed with Amazon EC2 Trn1 Instances

The AI Time Crunch: Why Training Speed is the Ultimate Competitive Edge

In the era of Generative AI, time is the new cloud currency.

Every business wants a massive language model, a perfectly tuned recommendation engine, or a zero-defect computer vision system. But the journey to creating those is often a costly, grueling race against the clock. Waiting weeks for a model to train—only to find a tiny error that forces you to start over—is the hidden killer of innovation and budget.

At ThirdEye Data, we see that pain daily. Training speed isn’t a technical footnote; it’s the single biggest factor dictating your time-to-market.

The solution for breaking this bottleneck? Amazon EC2 Trn1 Instances.

Trn1: Killing the GPU Bottleneck for Good

Trn1 instances are not AWS’s attempt to copy existing hardware; they are a fundamental reimagining of what deep learning computers should look like.

Built from the ground up, Trn1 is powered by the AWS Trainium chip, custom silicon designed specifically to accelerate the math behind modern AI. This isn’t hardware for general computing; it’s a hyper-specialized engine for deep learning training.

The Strategic Advantage:

- Up to 50% Lower Training Costs: Imagine cutting your cloud training bill in half. This saving doesn’t just lower expenses; at that rate the same budget covers roughly twice as many experiments, which shortens your R&D cycles.

- Unmatched Scalability: Trn1 uses the Elastic Fabric Adapter (EFA) to link up to 10,000 Trainium accelerators together. This means you can scale the training of a massive, multi-billion-parameter model almost linearly, with far less of the communication overhead that typically kills distributed training performance.

- Built for Modern Models: The hardware is optimized for the Transformer architectures (BERT, GPT, T5) that dominate modern AI, drastically speeding up the complex attention mechanisms at their core.
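One way to check the "almost linearly" claim on your own workload is to compare measured throughput against the ideal linear speedup. The sketch below is only an illustration: the function name `scaling_efficiency` and the throughput numbers are hypothetical, not measurements from Trn1.

```python
def scaling_efficiency(base_throughput, base_accelerators,
                       scaled_throughput, scaled_accelerators):
    """Fraction of the ideal linear speedup actually achieved (1.0 = perfectly linear)."""
    values = (base_throughput, base_accelerators, scaled_throughput, scaled_accelerators)
    if min(values) <= 0:
        raise ValueError("all inputs must be positive")
    # Ideal throughput if adding accelerators cost nothing in communication.
    ideal = base_throughput * (scaled_accelerators / base_accelerators)
    return scaled_throughput / ideal


# Hypothetical measurements: samples/second at 16 and at 128 accelerators.
eff = scaling_efficiency(1_000.0, 16, 7_200.0, 128)
print(f"scaling efficiency: {eff:.0%}")  # prints "scaling efficiency: 90%"
```

A value close to 1.0 means communication overhead is small at that cluster size; a value that drops as accelerators are added points to an interconnect or input-pipeline bottleneck.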

Trn1 is the high-octane fuel for your enterprise AI engine.

Amazon EC2 Trn1 Computation

Image courtesy: Amazon

Under the Hood: Designed for Deep Learning’s Core Problems

The magic lies in the Trainium NeuronCores. These are specialized processors that handle tensor operations—the heartbeat of deep learning—with an efficiency GPUs can’t match for this specific task.

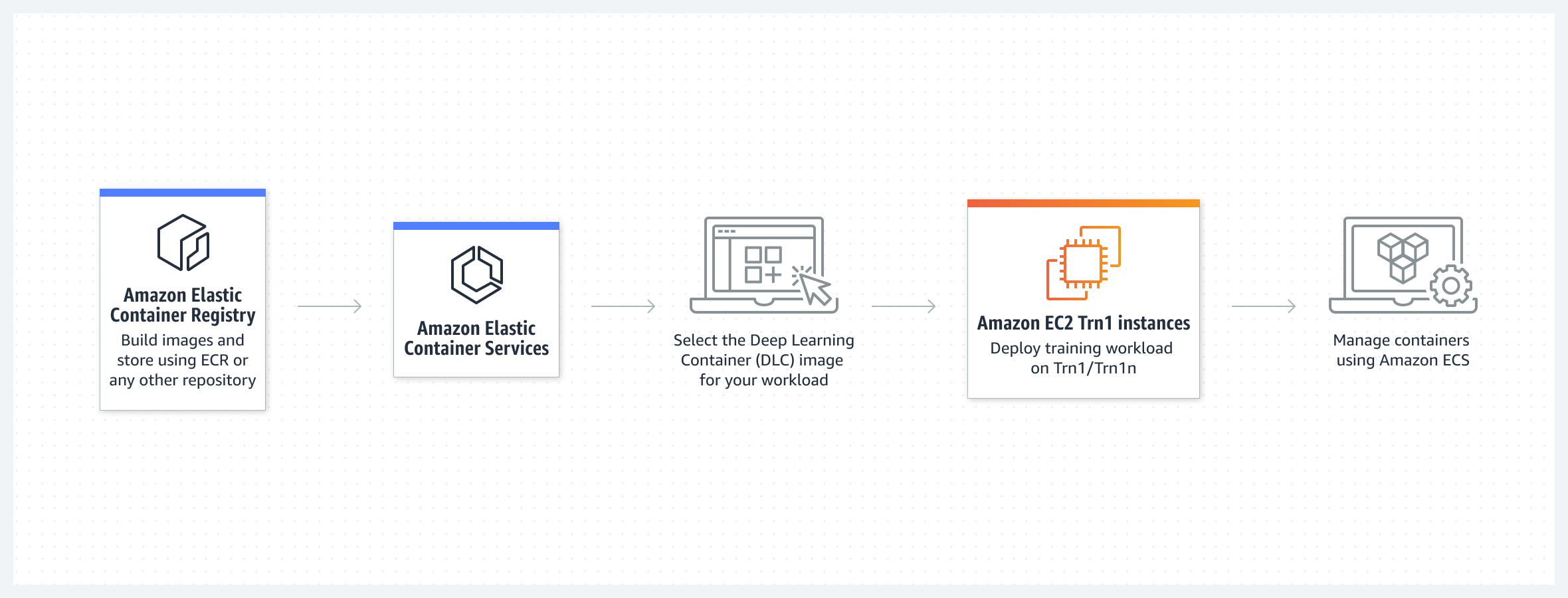

Crucially, AWS provides the Neuron SDK, a comprehensive software toolkit that acts as the translator. It allows our engineers to take standard models written in PyTorch or TensorFlow, compile them, and optimize them to run flawlessly on Trainium’s hardware.
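In PyTorch, that workflow looks roughly like an ordinary training loop that targets an XLA device. The following is a minimal sketch, not a definitive recipe: it assumes the PyTorch Neuron packages (which build on `torch_xla`) are installed on the Trn1 host, the helper names `neuron_available` and `train` are hypothetical, and it falls back to CPU when `torch_xla` is absent. Newer `torch_xla` releases may prefer different entry points, so check the Neuron documentation for the version you install.

```python
import importlib.util


def neuron_available() -> bool:
    """True when torch_xla (used by the PyTorch Neuron packages) can be imported."""
    return importlib.util.find_spec("torch_xla") is not None


def train(model, loader, loss_fn, lr=1e-3):
    """Run one pass over `loader`, on an XLA device if available, else on CPU."""
    import torch  # imported lazily so this module loads even without PyTorch

    if neuron_available():
        import torch_xla.core.xla_model as xm
        device = xm.xla_device()      # on a Trn1 host this maps to a NeuronCore
    else:
        xm = None
        device = torch.device("cpu")  # assumption: CPU fallback for local testing

    model = model.to(device)
    optimizer = torch.optim.SGD(model.parameters(), lr=lr)

    for inputs, targets in loader:
        inputs, targets = inputs.to(device), targets.to(device)
        optimizer.zero_grad()
        loss = loss_fn(model(inputs), targets)
        loss.backward()
        optimizer.step()
        if xm is not None:
            # XLA traces operations lazily; mark_step() ends the traced graph
            # so it gets compiled and executed.
            xm.mark_step()
    return model
```

The point of the sketch is that the model definition and the loop stay standard PyTorch; the device selection and the step boundary are the main places where the Neuron toolchain shows through.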

The core result is an infrastructure that supports:

- Ultra-Low Latency Communication: Second-generation EFA ensures data flows seamlessly between chips, even across hundreds of instances.

- Flexible Precision: Support for BF16 (bfloat16, the 16-bit "brain" floating-point format) and even configurable FP8 allows us to trade numerical precision for speed, minimizing training time without a meaningful loss in model quality.
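To make the BF16 trade-off concrete: bfloat16 keeps float32's 8 exponent bits (so the same range) but only 7 mantissa bits (so much coarser precision). The sketch below emulates that rounding in plain Python; `to_bfloat16` is a hypothetical helper for illustration, not part of the Neuron SDK, and it does not treat NaN specially.

```python
import math
import struct


def to_bfloat16(x: float) -> float:
    """Round x to the nearest bfloat16 value (ties to even), returned as a Python float."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]  # float32 bit pattern
    # Add a bias so that dropping the low 16 bits rounds to nearest, ties to even.
    rounding_bias = 0x7FFF + ((bits >> 16) & 1)
    bits = ((bits + rounding_bias) >> 16) << 16          # keep only the top 16 bits
    return struct.unpack("<f", struct.pack("<I", bits & 0xFFFFFFFF))[0]


print(to_bfloat16(math.pi))  # prints 3.140625 (only 2-3 significant decimal digits survive)
print(to_bfloat16(1.001))    # prints 1.0 (the small increment is rounded away)
print(to_bfloat16(1e38))     # still finite: the exponent range matches float32
```

This is why BF16 training usually keeps some quantities, such as accumulated sums, in higher precision: the range is safe, but small updates can vanish.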

In simple terms, Trn1 eliminates the traditional trade-off: you no longer have to choose between speed, cost, and scale.

Case in Point: Why Trn1 is Crucial for Enterprise LLMs

The generative AI boom has made LLMs essential, but training a custom LLM is prohibitively expensive for most companies.

With Trn1, ThirdEye Data can help clients fine-tune, pre-train, or adapt open-source models (like Llama or Falcon) on their proprietary data faster and cheaper than ever.

Think about the difference this makes:

- Instead of spending, say, a week and $50,000 fine-tuning a model on GPUs, you spend two days and $25,000 on Trn1.

- This extra budget and time can be immediately reinvested into running more advanced experiments or deploying the model faster.
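The arithmetic behind that illustrative comparison can be written out as below. Treating "a week" as seven days is an assumption, and the `TrainingRun` class and `compare` function are hypothetical names used only for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingRun:
    days: float
    cost_usd: float


def compare(baseline: TrainingRun, candidate: TrainingRun) -> dict:
    """Summarize what the candidate run saves relative to the baseline run."""
    return {
        "cost_saved_usd": baseline.cost_usd - candidate.cost_usd,
        "cost_reduction": 1 - candidate.cost_usd / baseline.cost_usd,
        "days_saved": baseline.days - candidate.days,
        # How many candidate runs fit in the budget of one baseline run.
        "runs_per_baseline_budget": baseline.cost_usd / candidate.cost_usd,
    }


gpu_run = TrainingRun(days=7, cost_usd=50_000)    # illustrative figures from the text
trn1_run = TrainingRun(days=2, cost_usd=25_000)   # illustrative figures from the text

summary = compare(gpu_run, trn1_run)
print(summary)
# cost_saved_usd: 25000, cost_reduction: 0.5, days_saved: 5, runs_per_baseline_budget: 2.0
```

Plugging in your own measured run times and invoices in place of the illustrative figures gives a like-for-like view of the savings.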

From accelerating real-time personalization engines for e-commerce to enabling rapid genomic analysis in healthcare, Trn1 transforms AI from a slow research endeavor into a decisive, competitive advantage.

Where the Rubber Meets the Road: Real-World AI Acceleration with Trn1

The theoretical power of Trainium (Trn1) translates directly into groundbreaking enterprise applications. Its capabilities are particularly transformative in scenarios where model size and training time directly impact competitive advantage.

Core Applications Driving Enterprise AI Transformation

- Large Language Model (LLM) Training: Trn1 makes it economically viable for the enterprise to own its LLM destiny. We can now cost-efficiently fine-tune massive models (like Llama, Falcon, or custom GPT-like architectures) on your unique, proprietary business data. This turns a generic model into an enterprise expert.

- Computer Vision at Speed: For industries like manufacturing, security, and retail analytics, where every second counts, Trn1 delivers. It enables faster distributed training for complex image classification, segmentation, and detection models, accelerating product rollout and defect detection pipelines.

- Next-Gen Recommendation Systems: In the competitive e-commerce and media space, personalization is paramount. High-throughput training on Trn1 helps organizations rapidly optimize and retrain real-time personalization engines, ensuring customer recommendations are always fresh, relevant, and profitable.

- Healthcare and Life Sciences Breakthroughs: For R&D-heavy organizations, time is literally the most critical resource. Trn1 accelerates the training cycle for complex genomic sequencing and medical imaging models, slashing research timelines and speeding up diagnostic development.

- Enterprise Generative AI: When deployed via Amazon SageMaker and optimized by ThirdEye Data’s accelerators, Trn1 becomes the foundational enabler for advanced generative AI systems, from bespoke, secure internal chatbots to custom text-to-image models.

Strategic Insight: Where Trn1 Fits in the Cloud Compute Stack

To understand Trn1’s place, you must compare it to the existing heavyweights. Trn1 is not merely a replacement; it’s a dedicated evolution aimed squarely at training efficiency.

| Feature | Trn1 (AWS Trainium) | P4d (NVIDIA A100) | Inf2 (AWS Inferentia2) |
| --- | --- | --- | --- |
| Primary Purpose | Model Training | Model Training | Model Inference (Deployment) |
| Max Network Bandwidth | 800 Gbps (1600 Gbps on Trn1n) | 400 Gbps | 100 Gbps |
| Cost Efficiency | Up to 50% cheaper for training | Moderate | Highest (for inference) |

While P4d instances offer immense parallel processing power and remain critical for certain GPU-accelerated workloads, Trn1 is the next logical step for organizations whose top priority is reducing the immense cost and energy consumption associated with large-scale, continuous model training.

Challenges: Navigating the New Frontier

No major technology leap is friction-free. As early adopters, we acknowledge the initial hurdles, but we have the solutions ready:

- The Neuron SDK Learning Curve: Trainium utilizes the proprietary Neuron SDK and compiler. This means developers must get comfortable with a new set of tools. This is less of a technical limitation and more of an engineering resource investment challenge.

- Framework Adaptation: While AWS provides robust support for major frameworks like TensorFlow and PyTorch, more specialized or older custom frameworks may require a small amount of code adaptation to fully leverage the hardware’s efficiency.

- Ecosystem Maturity: The Trainium ecosystem is robust and rapidly evolving, but it is naturally younger than NVIDIA’s CUDA ecosystem, which has been maturing since 2007. This means certain niche community resources or deep library integrations might still be developing.

Our Mitigation Strategy:

At ThirdEye Data, we view these challenges as solvable engineering problems. Our experts integrate custom optimization layers and provide MLOps wrappers that effectively mask the complexity of the Neuron SDK, ensuring our clients’ demanding workloads are seamlessly portable to Trn1 environments without requiring costly, full-scale codebase rewrites.

Overcoming the Learning Curve: Our Expertise is Your Accelerator

Any disruptive technology comes with integration challenges. The main hurdle with Trn1 is getting comfortable with the new Neuron SDK ecosystem.

That’s where ThirdEye Data’s core value lies.

Our AI engineers have built the custom optimization layers, code wrappers, and automated deployment pipelines necessary to make Trn1 integration seamless. We handle the:

- Model Porting: Converting your existing models to be Neuron-compatible.

- Performance Profiling: Using the Neuron Profiler to identify and eliminate every bottleneck.

- SageMaker Integration: Setting up managed, distributed training workflows that scale effortlessly.

For our clients, adopting Trn1 is not a painful IT project; it’s an immediate, high-ROI switch to a more efficient compute paradigm.

Frequently Asked Questions:

- What are Amazon EC2 Trn1 Instances?

Amazon EC2 Trn1 instances are purpose-built compute instances optimized for high-performance machine learning training. They are powered by AWS Trainium chips, designed to accelerate the training of large-scale deep learning models at a lower cost.

- How do Trn1 instances differ from GPU-based instances like P4 or P5?

Trn1 instances use Trainium accelerators optimized specifically for ML training, while P4/P5 instances use general-purpose NVIDIA GPUs. Trn1 can offer higher throughput and lower cost per training job, especially for Transformer-based and large language models.

- What types of workloads are best suited for Trn1?

Trn1 instances are ideal for training large language models, generative AI, deep learning models with billions of parameters, and distributed training workloads using frameworks like PyTorch or TensorFlow.

- What performance advantages do Trn1 instances offer?

They offer up to 50% lower training costs and significantly faster training times due to advanced features like high-speed NeuronLink interconnect, large-scale networking (up to 800 Gbps on Trn1 and up to 1600 Gbps on Trn1n), and specialized hardware acceleration for matrix operations.

- How do Trn1 instances handle distributed training?

Trn1 supports distributed training through NeuronLink, which connects the accelerators within an instance, and EFA (Elastic Fabric Adapter), which connects instances to each other. Together they enable efficient communication between accelerators and make it possible to scale training across multiple instances with minimal latency.

- What is the Neuron SDK and why is it required?

The AWS Neuron SDK is a toolchain that allows machine learning frameworks like PyTorch and TensorFlow to run on Trainium hardware. It handles graph optimization, compilation, runtime orchestration, and model execution.

- How secure are Trn1 instances for enterprise workloads?

Trn1 instances are built on AWS Nitro System, providing hardware-level isolation, encrypted instance storage, and secure boot. This ensures compliance-grade security for sensitive training data and model assets.

- What are the memory and networking capabilities of Trn1?

Trn1 instances offer up to 512 GB of memory and ultra-high-speed networking of up to 800 Gbps (up to 1600 Gbps on the Trn1n variant). This is critical for handling large batches, massive datasets, and multi-node training jobs.

- Can I migrate existing models to Trn1?

Yes. Most models built in PyTorch or TensorFlow can be migrated using the Neuron SDK. While minor code adjustments may be needed, AWS provides tools, documentation, and profiling support for smooth migration.

- When should organizations consider Trn1 over other instances?

Organizations should choose Trn1 when they need to train large or complex ML models more efficiently, reduce cost, scale training across clusters, or build advanced AI solutions such as LLMs, generative AI, or multimodal models.

The Future is Custom: Why AI Sovereignty Matters

The era of relying solely on general-purpose hardware is fading. By betting on custom silicon, AWS is giving companies more AI sovereignty—more control over their costs, performance, and strategic direction.

At ThirdEye Data, we view Trn1 as a pathway to operational excellence. It empowers our clients to be pioneers, allowing them to train bigger, smarter models faster and without the prohibitive cost barriers of the past.

The race in AI is no longer about who has the biggest model. It’s about who can train, iterate, and deploy that model with maximum efficiency. With Amazon EC2 Trn1 instances, you can stop waiting and start winning.